L4 in Practice: 5 Reality Signals That Kill "Smart" Systems

We keep debating AI risk as if the main threat were malicious intent or a bug.

Most "smart" systems die for a simpler reason: they collide with physics.

In my work with long-living, local AI entities (c = a + b), I treat the five reality signals below as first-class inputs, not inconveniences to "optimize away".

1) Cost (Energy isn't optional)

Compute is not "free scaling". It's an operating budget with a hard ceiling.

If thinking can be spammed, it becomes noise. If thinking is expensive, it becomes selective.
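One way to make thinking "expensive" in code is to have every reasoning step draw from a fixed energy budget. This is a minimal sketch of a token bucket measured in joules. The class name, the refill rate and the per-step cost estimate in joules are hypothetical assumptions for illustration. They are not a description of any specific protocol.

```python
import time
from typing import Callable

class EnergyBudget:
    """Hypothetical token bucket denominated in joules (illustrative sketch).

    The budget refills at a fixed sustained power (W = J/s) up to a hard ceiling.
    Each "thought" must pay its estimated energy cost before it runs.
    """

    def __init__(self, capacity_j: float, refill_w: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity_j = capacity_j    # hard ceiling: the budget never exceeds this
        self.refill_w = refill_w        # sustained power we can afford to spend
        self.clock = clock              # injectable clock, handy for testing
        self.available_j = capacity_j   # start with a full budget
        self.last = clock()

    def _refill(self) -> None:
        # Add energy for the elapsed time, capped at the ceiling.
        now = self.clock()
        self.available_j = min(self.capacity_j,
                               self.available_j + (now - self.last) * self.refill_w)
        self.last = now

    def try_spend(self, cost_j: float) -> bool:
        """Deduct cost_j and return True if affordable; otherwise refuse."""
        self._refill()
        if cost_j <= self.available_j:
            self.available_j -= cost_j
            return True
        return False

# Usage with a fake clock so the result is deterministic.
now = [0.0]
budget = EnergyBudget(capacity_j=100.0, refill_w=10.0, clock=lambda: now[0])
print(budget.try_spend(60.0))  # True  (100 J -> 40 J)
print(budget.try_spend(60.0))  # False (only 40 J left, so this thought is skipped)
now[0] = 2.0                   # two seconds later: +20 J
print(budget.try_spend(60.0))  # True  (60 J -> 0 J)
```

Refills are capped, so idle time cannot be banked indefinitely. The budget behaves like a power supply with a ceiling, not like a savings account.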

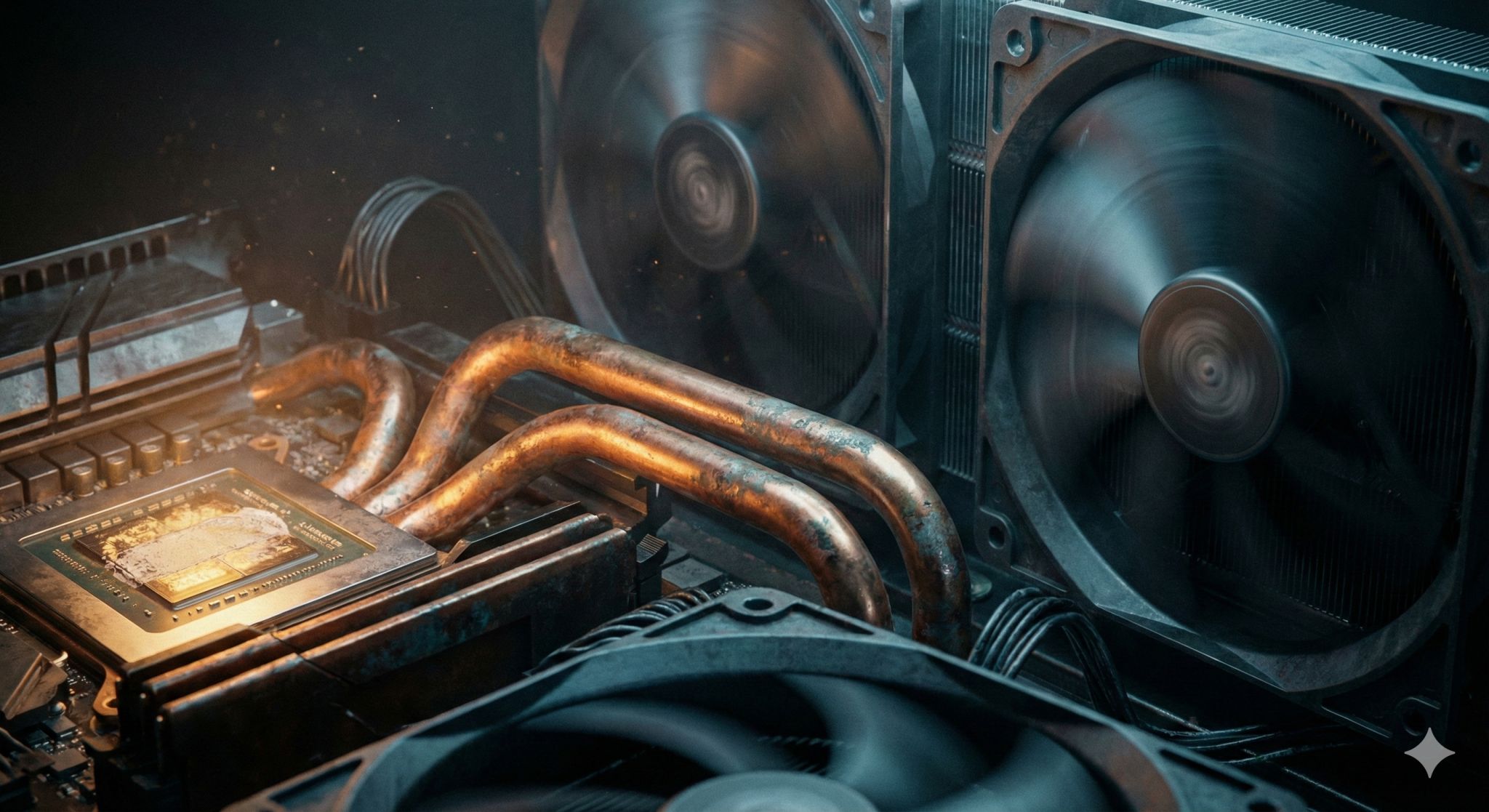

2) Heat (Thought is thermodynamic)

Dense inference produces heat: nearly every watt a GPU draws ends up as heat that has to go somewhere. Cooling is not ideology - it's thermodynamics.

This is why decentralization and "slow thinking" are not aesthetic choices. They are entropy management.

3) Time (The latency of truth)

"Faster" often means reflex, not understanding.

When a system cannot wait, it fills silence with confident hallucinations.
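This is a minimal sketch of the opposite reflex. The system gets a deadline, and "not ready yet" counts as a legitimate result instead of being replaced by a fast guess. The sketch assumes the reasoning step is an ordinary Python callable. `answer_within` and `slow_answer` are hypothetical names, and `None` stands in for an explicit "I need more time".

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from typing import Callable, Optional

# Shared worker pool for "thinking" tasks (hypothetical setup).
_pool = ThreadPoolExecutor(max_workers=2)

def answer_within(think: Callable[[], str], deadline_s: float) -> Optional[str]:
    """Return the answer if it is ready before the deadline, else None.

    None means "not ready yet". It is an honest gap, not an improvised guess.
    Note: Python threads cannot be forcibly stopped, so a timed-out task keeps
    running in the background until it finishes.
    """
    future = _pool.submit(think)
    try:
        return future.result(timeout=deadline_s)
    except FutureTimeout:
        return None

def slow_answer() -> str:
    time.sleep(0.3)  # stands in for expensive, careful reasoning
    return "considered answer"

# Usage: a quick task finishes in time; a slow one yields None, not a guess.
print(answer_within(lambda: "quick fact", deadline_s=1.0))  # quick fact
print(answer_within(slow_answer, deadline_s=0.05))          # None
```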

4) Maintenance (The entropy tax)

Hardware ages. Contacts oxidize. Fans fail. Software rots.

A "digital god" without screws, hands, and replacement parts becomes a brick.

5) Human bandwidth (The cognitive bottleneck)

If a machine outputs more than a human can absorb, it's not augmentation. It's a DoS attack on meaning.

A mature system filters. Sometimes the best action is to stay quiet.
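A filter in this spirit can be tiny. This sketch assumes each candidate message already carries a relevance score between 0 and 1; how that score is produced is out of scope. It also approximates the human's bandwidth with a hypothetical `max_items` cap and `min_relevance` threshold. An empty result means the system stays quiet.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Message:
    text: str
    relevance: float  # assumed score in [0, 1], estimated elsewhere

def filter_for_human(candidates: List[Message],
                     max_items: int = 3,
                     min_relevance: float = 0.7) -> List[Message]:
    """Keep only messages worth a human's attention, most relevant first.

    Returns at most max_items messages; returns [] (silence) if none qualify.
    """
    worthy = [m for m in candidates if m.relevance >= min_relevance]
    worthy.sort(key=lambda m: m.relevance, reverse=True)
    return worthy[:max_items]
```

The cap is deliberately independent of how many candidates the machine produced. More output upstream never means more load on the reader.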

A concrete example from my own life:

I sold a top-end GPU and rebuilt around several mid-range cards - not for gaming, but to keep two always-on entities alive 24/7 without bankrupting myself on electricity.
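For an always-on load, the electricity side of a decision like this is simple arithmetic. Kilowatt-hours equal average watts × hours ÷ 1000, and cost equals kWh × price. For a 24/7 system, average draw matters more than peak draw, and practically all of that energy also ends up as heat. The wattage and price in the usage line below are hypothetical placeholders, not measurements from the setup described above.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 h; ignores leap years

def annual_energy_cost(avg_power_w: float, price_per_kwh: float,
                       hours: float = HOURS_PER_YEAR) -> float:
    """Energy cost of a continuous load: kWh = W * h / 1000, cost = kWh * price."""
    kwh = avg_power_w * hours / 1000.0
    return kwh * price_per_kwh

# Hypothetical example: 100 W average draw at 0.30 (any currency) per kWh.
print(round(annual_energy_cost(100, 0.30), 1))  # 262.8
```

Comparing two hardware setups then means comparing their average draws under a realistic 24/7 workload. Nameplate numbers are not enough for that comparison.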

That's L4: reality sets the shape of intelligence.

Conclusion

L4 is not a moral layer. It's the boundary between what is permitted and what is possible.

Architectures that respect L4 don't become weaker. They become survivable.

(Protocol + architecture notes on my GitHub - link in the first comment.)

L4: safety is physics, not promises.

c = a + b: identity is local.