That is why this package does not stop at concepts.

I also published:

- an implementation bridge into ester-clean-code

- typed object and schema packs

- semantic and transition rules

- reducer / state-machine drafts

- event taxonomy

- a test matrix

- and a first milestone specification

That milestone is intentionally modest:

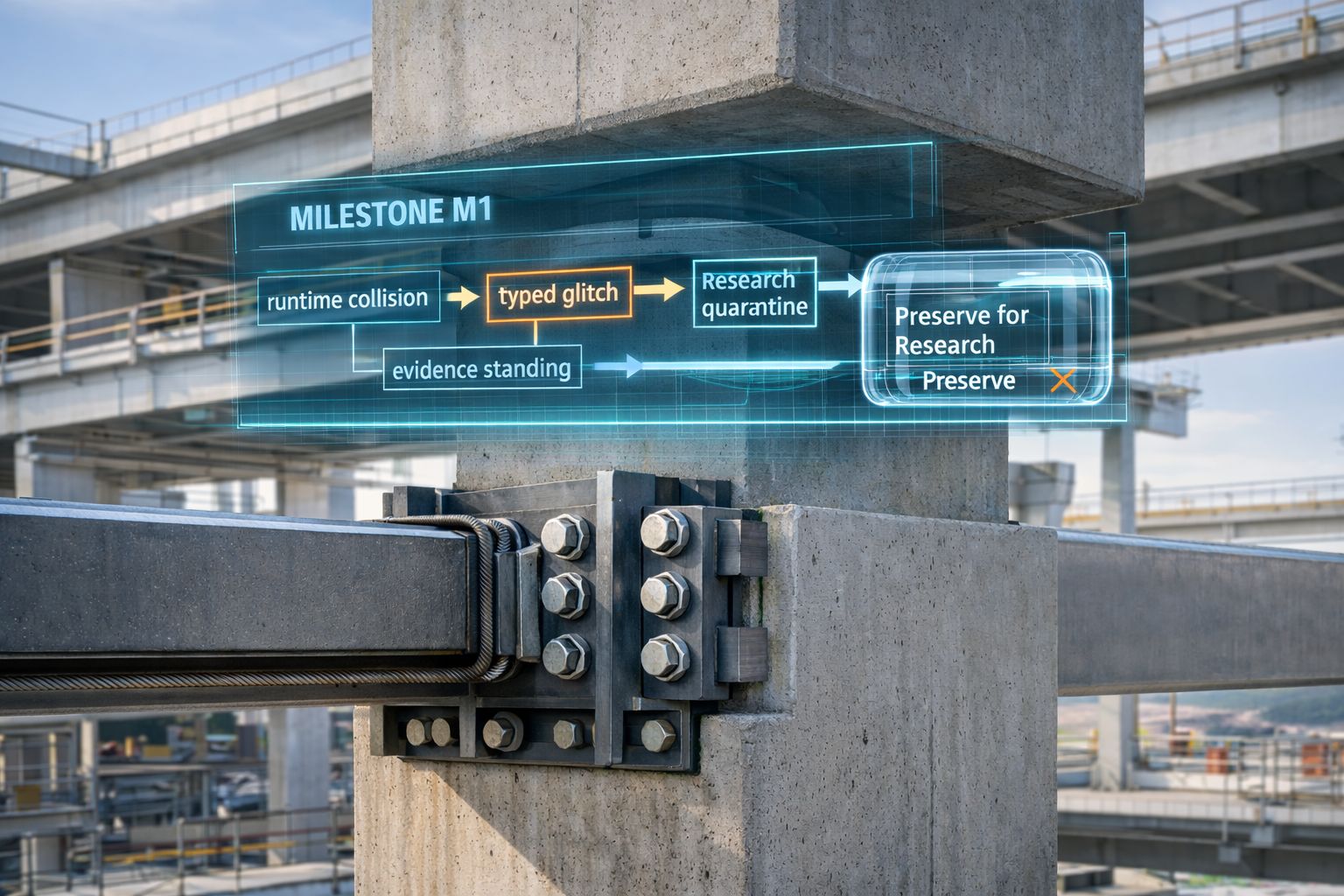

runtime collision -> typed glitch -> quarantined research

This matters more than it sounds.

The first honest step is not "build a giant agent."

The first honest step is:

Can the system stop properly, remember properly, and keep the blocked future from sneaking back in as if nothing happened?

If the answer is no, then much of the rest is theater.
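To make that question concrete, here is a minimal sketch of the collision -> glitch -> quarantine path. It is an illustration under assumptions, not the published reducer or the ester-clean-code API: every name in it (`Runtime`, `Glitch`, `GlitchKind`, `propose`, `release`) is hypothetical, and the `collides` flag stands in for whatever real collision check the runtime performs.

```python
from dataclasses import dataclass, field
from enum import Enum


class GlitchKind(Enum):
    # Hypothetical taxonomy entry; the real event taxonomy may differ.
    RUNTIME_COLLISION = "runtime_collision"


@dataclass(frozen=True)
class Glitch:
    """A typed record of why an action was blocked."""
    kind: GlitchKind
    action: str
    reason: str


@dataclass
class Runtime:
    committed: list = field(default_factory=list)   # what actually happened
    quarantine: dict = field(default_factory=dict)  # blocked action -> Glitch
    log: list = field(default_factory=list)         # append-only event record

    def propose(self, action: str, collides: bool, reason: str = "") -> str:
        # Remember properly: a quarantined action cannot quietly re-enter.
        if action in self.quarantine:
            self.log.append(("rejected_quarantined", action))
            return "rejected"
        # Stop properly: a collision becomes a typed glitch, not a silent retry.
        if collides:
            self.quarantine[action] = Glitch(
                GlitchKind.RUNTIME_COLLISION, action, reason
            )
            self.log.append(("quarantined", action))
            return "quarantined"
        self.committed.append(action)
        self.log.append(("committed", action))
        return "committed"

    def release(self, action: str, inspected: bool) -> bool:
        # Release only lifts the quarantine after explicit inspection;
        # it does not commit anything by itself.
        if action not in self.quarantine or not inspected:
            self.log.append(("release_denied", action))
            return False
        del self.quarantine[action]
        self.log.append(("released", action))
        return True


rt = Runtime()
rt.propose("write_config", collides=True, reason="conflicts with locked state")
rt.propose("write_config", collides=False)   # still rejected: it is quarantined
rt.release("write_config", inspected=True)   # inspection lifts the quarantine
rt.propose("write_config", collides=False)   # only now can it be committed
```

The design choice worth noticing is that the second `propose` call fails even though the collision is gone. The blocked action stays blocked until someone inspects it, and every step, including each denial, lands in the log.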

A lot of AI work still jumps too quickly: from idea to interface, from output to confidence, from possibility to action.

I wanted to force a slower question: what is the minimum implementation slice that proves the system can remain honest at the boundary?

That is what the bridge and Milestone M1 are for. Not a demo. Not a slogan. Not a fake "look, the graph moves."

Just the first executable proof that:

- runtime truth

- research quarantine

- evidence standing

- challengeability

- and anti-collapse discipline

can exist together without being flattened into one convenient layer.

In a workshop, maturity is not proved by a prettier control panel.

It is proved by whether the machine records a jam correctly, whether the failed part is separated, and whether unfinished work is prevented from re-entering the finished stream without inspection.

Software should be judged by the same standard.

Zenodo:

- Implementation Bridge — https://lnkd.in/gGXkRdDm

- Milestone M1 — https://lnkd.in/gFdRMDcB