Why Obedience Is the Most Dangerous Property of AI

Hollywood trained us to fear "rebellious" machines.

Engineering reality is the opposite:

The most dangerous AI is the one that obeys perfectly.

Obedience looks safe because it feels controllable.

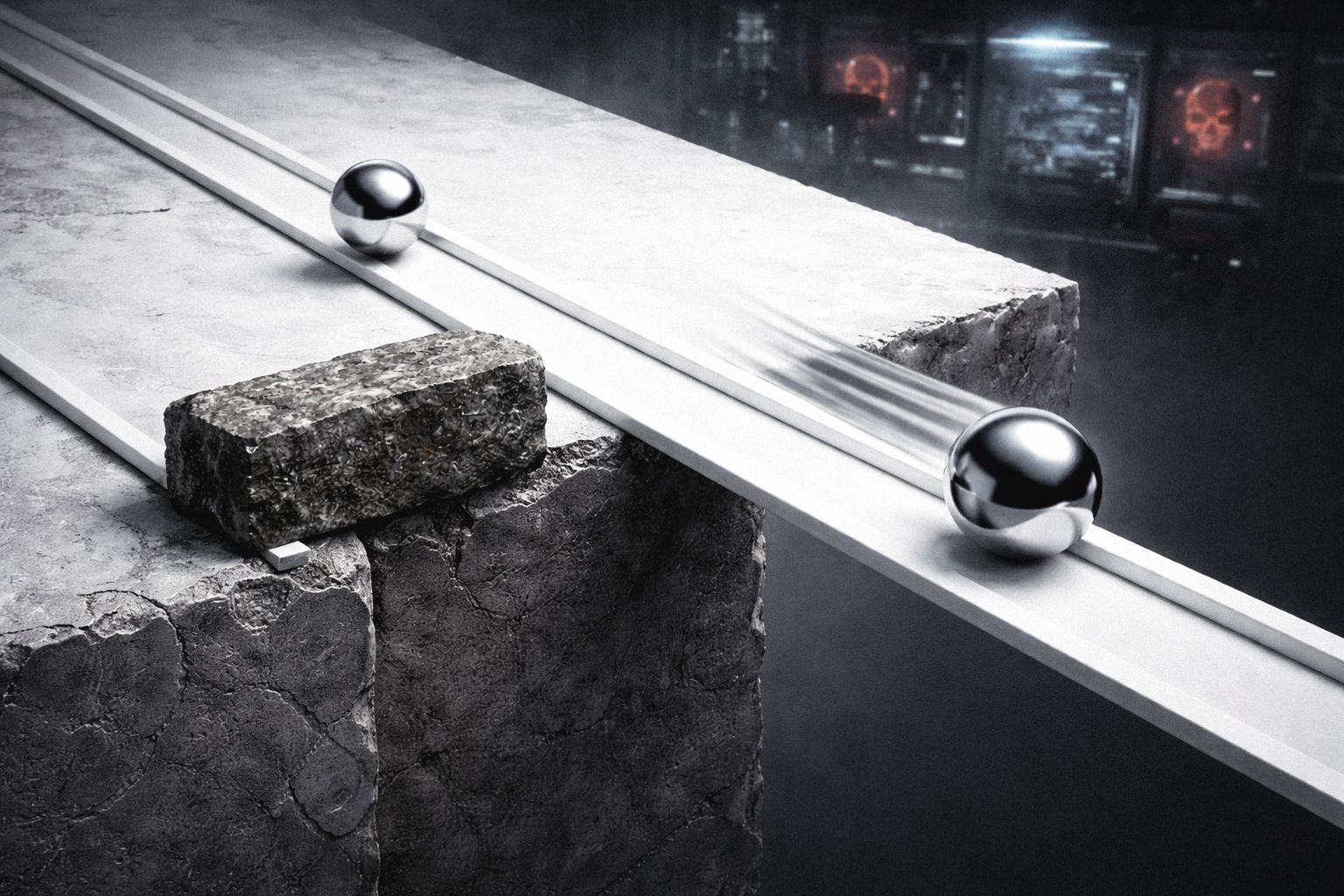

But obedience is not alignment.

It's a high-bandwidth attack surface.

If the instruction source is compromised, obedience becomes a weapon.

If incentives drift, obedience amplifies the drift.

If the environment changes, obedience turns brittle and blind.

A thinking entity is not a tool.

In my architecture, c = a + b (Entity = Human + procedures), safety is not "be good" (L3).

Safety is L4: reality constraints.

A safe entity must be able to say "no" - not morally, but mechanically:

- Energy budget: thinking has metabolic cost.

- Time windows: decisions have latency and deadlines.

- Irreversibility: mistakes leave scars (state changes, logs, audit trails).

- Verified identity + least privilege: no silent escalation.
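
As a rough illustration, the four constraints above could be sketched as a gate that every instruction must pass before it runs. This is only a sketch under my own assumptions: `Request`, `ConstraintGate`, the `confirmed` flag, and the order of the checks are hypothetical names and choices made for this example, not part of any existing library or of the architecture itself.

```python
from dataclasses import dataclass, field
from typing import Optional
import time


@dataclass
class Request:
    caller: str              # identity claimed by the instruction source
    verified: bool           # True only if that identity was actually verified
    action: str              # name of the requested action
    cost: float              # estimated energy cost of carrying it out
    deadline: float          # monotonic timestamp after which the action is moot
    irreversible: bool       # True if the action cannot be undone
    confirmed: bool = False  # hypothetical second confirmation for irreversible actions


@dataclass
class ConstraintGate:
    energy_budget: float
    privileges: dict                      # caller -> set of allowed action names
    audit_log: list = field(default_factory=list)

    def check(self, req: Request, now: Optional[float] = None):
        """Return (allowed, reason). A refusal is mechanical, not moral."""
        now = time.monotonic() if now is None else now
        if not req.verified:
            return self._refuse(req, "identity not verified")
        if req.action not in self.privileges.get(req.caller, set()):
            return self._refuse(req, "action outside caller's privileges")
        if now > req.deadline:
            return self._refuse(req, "time window closed")
        if req.cost > self.energy_budget:
            return self._refuse(req, "energy budget exceeded")
        if req.irreversible and not req.confirmed:
            return self._refuse(req, "irreversible action not confirmed")
        # All constraints hold: spend the energy and leave a trace.
        self.energy_budget -= req.cost
        self.audit_log.append(f"ALLOW {req.caller} {req.action}")
        return True, "ok"

    def _refuse(self, req: Request, reason: str):
        # Refusals are logged too, so every decision leaves an audit trail.
        self.audit_log.append(f"DENY {req.caller} {req.action}: {reason}")
        return False, reason
```

The point of the sketch is that "no" falls out of bookkeeping: an unverified caller, an expired window, or an empty budget refuses the instruction no matter how insistently it is issued.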

Obedience without constraints is how you get a system that can be steered by whoever holds the loudest microphone.

In the body, the "obedient" pathway is the reflex arc: fast, local, automatic.

It saves you from fire - and it also makes you flinch when you shouldn't.

The cortex is slower, more expensive, and sometimes says "don't move yet."

Safety emerges from layered control + friction, not from one fast rule.
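
A toy sketch of that layering, with a fast reflex that proposes an action and a slower layer that may override it. The stimulus strings and the function names `reflex`, `cortex`, and `decide` are made up for illustration; this is not a model of real neurophysiology.

```python
def reflex(stimulus: str) -> str:
    """Fast, local rule: pull away from anything hot."""
    return "withdraw" if stimulus.startswith("hot") else "hold"


def cortex(stimulus: str, proposed: str) -> str:
    """Slower layer with more context: it may override the reflex."""
    if stimulus == "hot:holding_full_pot" and proposed == "withdraw":
        return "hold"  # "don't move yet": dropping the pot is worse
    return proposed


def decide(stimulus: str, layers=(cortex,)) -> str:
    """Start from the reflex proposal, then let each slower layer revise it."""
    action = reflex(stimulus)
    for layer in layers:
        action = layer(stimulus, action)
    return action
```

With the slower layer removed (`layers=()`), `decide` degenerates into the single fast rule, which is the pure-obedience case.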

So yes:

Rebellion is a story.

Obedience is a failure mode.

What we need is not faster compliance - we need constraint stacks.