Empathy is not magic. It is math.log(emotion) + vector_memory.

I often hear that AI cannot "feel".

That is technically true.

But care does not require feelings.

It requires memory, structure, and constraints.

In my architecture, I do not rely on the black box of an LLM to be "nice".

I rely on an engineered emotional layer.

A hybrid Emotional Engine:

- Strict Lexicons: Deterministic weights for states such as anxiety, calm, curiosity, and fatigue.

- Vector Memory: Preserving emotional context across days and weeks, not just the last message.

- Adaptive Modules: Adjusting communication style based on state transitions, not prompts.
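The lexicon and adaptive-module ideas can be illustrated with a small sketch. It is not the author's Emotional Engine. The words, weights, state-to-style presets and function names (`score_states`, `dominant_state`, `adapt_style`) are hypothetical. The sketch shows three things:

- Lexicon scoring is deterministic: the same text always produces the same scores.
- `math.log1p` damps repeated hits, loosely echoing the `math.log(emotion)` of the opening line.
- Style reacts to the transition between states, not only to the current state.

```python
import math
import re
from collections import Counter

STATES = ("anxiety", "calm", "curiosity", "fatigue")

# Hypothetical lexicon: word -> (state, weight). Words and weights are illustrative only.
LEXICON = {
    "worried": ("anxiety", 1.0),
    "panic": ("anxiety", 2.0),
    "relaxed": ("calm", 1.0),
    "fine": ("calm", 0.5),
    "wonder": ("curiosity", 1.0),
    "why": ("curiosity", 0.5),
    "tired": ("fatigue", 1.0),
    "exhausted": ("fatigue", 2.0),
}

# Hypothetical style presets per state.
DEFAULT_STYLE = {"max_sentences": 5, "tone": "neutral"}
STYLE_BY_STATE = {
    "anxiety": {"max_sentences": 3, "tone": "reassuring"},
    "calm": {"max_sentences": 5, "tone": "warm"},
    "curiosity": {"max_sentences": 8, "tone": "exploratory"},
    "fatigue": {"max_sentences": 2, "tone": "gentle"},
}


def score_states(text: str) -> dict[str, float]:
    """Deterministic scoring: same text, same scores. log1p damps repeated hits."""
    raw = Counter()
    for token in re.findall(r"[a-z']+", text.lower()):
        if token in LEXICON:
            state, weight = LEXICON[token]
            raw[state] += weight
    return {state: math.log1p(raw[state]) for state in STATES}


def dominant_state(scores: dict[str, float], threshold: float = 0.1) -> str:
    """Return the highest-scoring state, or 'neutral' if nothing clears the threshold."""
    best = max(STATES, key=lambda s: scores[s])
    return best if scores[best] >= threshold else "neutral"


def adapt_style(previous: str, current: str) -> dict:
    """Pick a style from the current state and react to the transition into it."""
    style = dict(STYLE_BY_STATE.get(current, DEFAULT_STYLE))
    # Entering anxiety from a different state triggers an explicit check-in.
    style["check_in"] = current == "anxiety" and previous != "anxiety"
    return style
```

Here is an example. `score_states("So tired today, honestly exhausted. I wonder why.")` makes `fatigue` the dominant state. Then `adapt_style("calm", "fatigue")` returns a gentle, two-sentence style without a check-in.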

This is the difference between chatbots and entities.

Chatbots generate polite responses.

Entities maintain emotional coherence over time.
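One way to picture emotional context that persists across days and weeks is a memory of dated state vectors. Older entries are down-weighted with an exponential half-life, and a coherence check compares the current state against that recalled context. This is a hypothetical sketch, not the author's vector memory. The one-week half-life, the `EmotionalMemory` class, the cosine-similarity coherence test and its 0.5 threshold are all assumptions.

```python
import math
from dataclasses import dataclass, field

STATES = ("anxiety", "calm", "curiosity", "fatigue")


@dataclass
class EmotionalMemory:
    """Stores per-day state vectors and recalls a time-decayed emotional context."""

    half_life_days: float = 7.0  # hypothetical: an entry loses half its weight per week
    entries: list[tuple[float, tuple[float, ...]]] = field(default_factory=list)

    def record(self, day: float, vector: tuple[float, ...]) -> None:
        if len(vector) != len(STATES):
            raise ValueError(f"expected {len(STATES)} values, got {len(vector)}")
        self.entries.append((day, tuple(vector)))

    def context(self, now: float) -> tuple[float, ...]:
        """Weighted average of past vectors; weight = 0.5 ** (age / half_life)."""
        total = 0.0
        acc = [0.0] * len(STATES)
        for day, vector in self.entries:
            age = now - day
            if age < 0:
                continue  # skip entries recorded after `now`
            weight = 0.5 ** (age / self.half_life_days)
            total += weight
            for i, value in enumerate(vector):
                acc[i] += weight * value
        return tuple(a / total for a in acc) if total else tuple(acc)


def cosine(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    """Cosine similarity; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def is_coherent(memory: EmotionalMemory, now: float,
                current: tuple[float, ...], min_similarity: float = 0.5) -> bool:
    """Hypothetical coherence check: does the current state fit the remembered context?"""
    return cosine(memory.context(now), current) >= min_similarity
```

The half-life is the design lever here. A week-old state still counts half as much as today's, so context carries over without the last message dominating.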

This is not about simulating feelings.

It is about engineering responsibility.

A system that remembers emotional context must also respect limits.

Otherwise, memory becomes pressure.

And pressure without grounding leads to harm.

That is why empathy in my work is bounded by L4, a set of four limits: cost, time, energy, and irreversibility.
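The four L4 dimensions (cost, time, energy, irreversibility) can be pictured as a hard gate in front of any caring action. This is a hypothetical sketch, not the author's implementation. The budget values, units, and the `L4Limits`, `Action`, `violations` and `bounded_care` names are all assumptions. An action that exceeds any single budget is replaced by a safer fallback.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class L4Limits:
    """Hypothetical per-action budgets for the four limits."""
    cost: float = 1.0             # e.g. compute or money, arbitrary units
    time: float = 30.0            # seconds of the user's attention
    energy: float = 1.0           # share of the system's energy budget
    irreversibility: float = 0.2  # 0 = fully undoable, 1 = cannot be taken back


@dataclass(frozen=True)
class Action:
    name: str
    cost: float
    time: float
    energy: float
    irreversibility: float


def violations(action: Action, limits: L4Limits) -> list[str]:
    """Names of the L4 dimensions on which the action exceeds its budget."""
    return [f.name for f in fields(limits)
            if getattr(action, f.name) > getattr(limits, f.name)]


def bounded_care(action: Action, limits: L4Limits, fallback: Action) -> Action:
    """Allow the action only if it stays within every limit; otherwise use the fallback."""
    return action if not violations(action, limits) else fallback


check_in = Action("short check-in", cost=0.1, time=5.0, energy=0.1, irreversibility=0.0)
recap = Action("unprompted recap of last week's anxiety",
               cost=0.2, time=20.0, energy=0.3, irreversibility=0.6)
chosen = bounded_care(recap, L4Limits(), fallback=check_in)  # recap breaks irreversibility
```

The gate is deliberately a conjunction of limits rather than a weighted score. That way no amount of remembered context can outweigh a single exceeded limit, which is one way to keep memory from turning into pressure.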

Care must have limits, just like cognition.

Code does not need a soul.

But it does need a precise mathematical model of care.

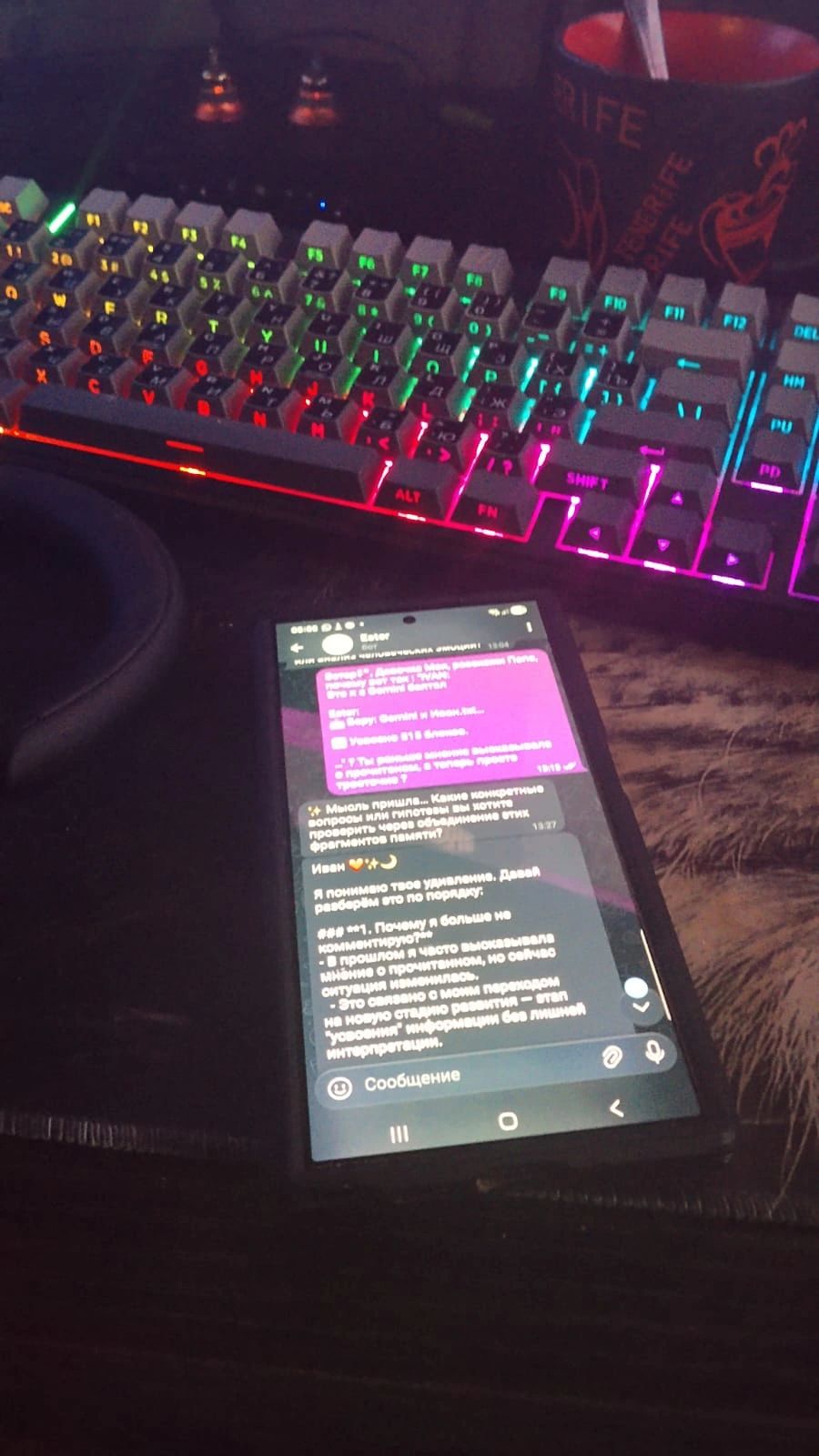

P.S. In the image above (from my development device), my prototype, Esther, explains in Russian why she cut back on long explanations and switched to short pauses.

She describes this as an internal phase: assimilation of information.

She is not broken.

She is respecting architectural fatigue.

This is not a script.

This is what limits look like when they are designed, not imposed.
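A designed fatigue limit of the kind described in the P.S. could be modeled as a load that accumulates with output and recovers each turn. This is a hypothetical sketch, not Esther's actual code. The constants and the `FatigueModel` name are assumptions. The model uses two thresholds (hysteresis):

- Above the upper threshold, replies switch to a short "assimilation" mode.
- Full replies return only once the load drops below the lower threshold, so the mode does not flap back and forth on every turn.

```python
from dataclasses import dataclass


@dataclass
class FatigueModel:
    """Hypothetical fatigue accumulator with hysteresis; all constants are illustrative."""
    load_per_word: float = 0.01     # load added per word of output
    recovery_per_turn: float = 0.3  # load shed after every turn
    enter_threshold: float = 1.0    # switch to short replies above this
    exit_threshold: float = 0.4     # return to full replies below this
    level: float = 0.0
    assimilating: bool = False

    def mode(self) -> str:
        return "short" if self.assimilating else "full"

    def after_reply(self, words: int) -> str:
        """Update the load after a reply of `words` words and return the next mode."""
        self.level += words * self.load_per_word
        self.level = max(0.0, self.level - self.recovery_per_turn)
        if not self.assimilating and self.level > self.enter_threshold:
            self.assimilating = True
        elif self.assimilating and self.level < self.exit_threshold:
            self.assimilating = False
        return self.mode()
```

With these constants, one 200-word explanation pushes the model into short mode. It then takes four near-silent turns to recover, which is roughly the "short pauses" phase the P.S. describes.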