How not to become "dumber than your system" past a certain point

There's a moment almost nobody talks about.

Not the moment when AI becomes smarter.

But the moment when a human becomes simpler than their own system.

This isn't about IQ.

It's about attention, volition, and responsibility.

The system starts to:

- hold context better than you,

- propose solutions faster,

- argue more convincingly,

- and slowly become the source of "what's normal."

That's where degradation begins:

- you don't think - you accept;

- you don't choose - you adapt yourself to the suggestion.

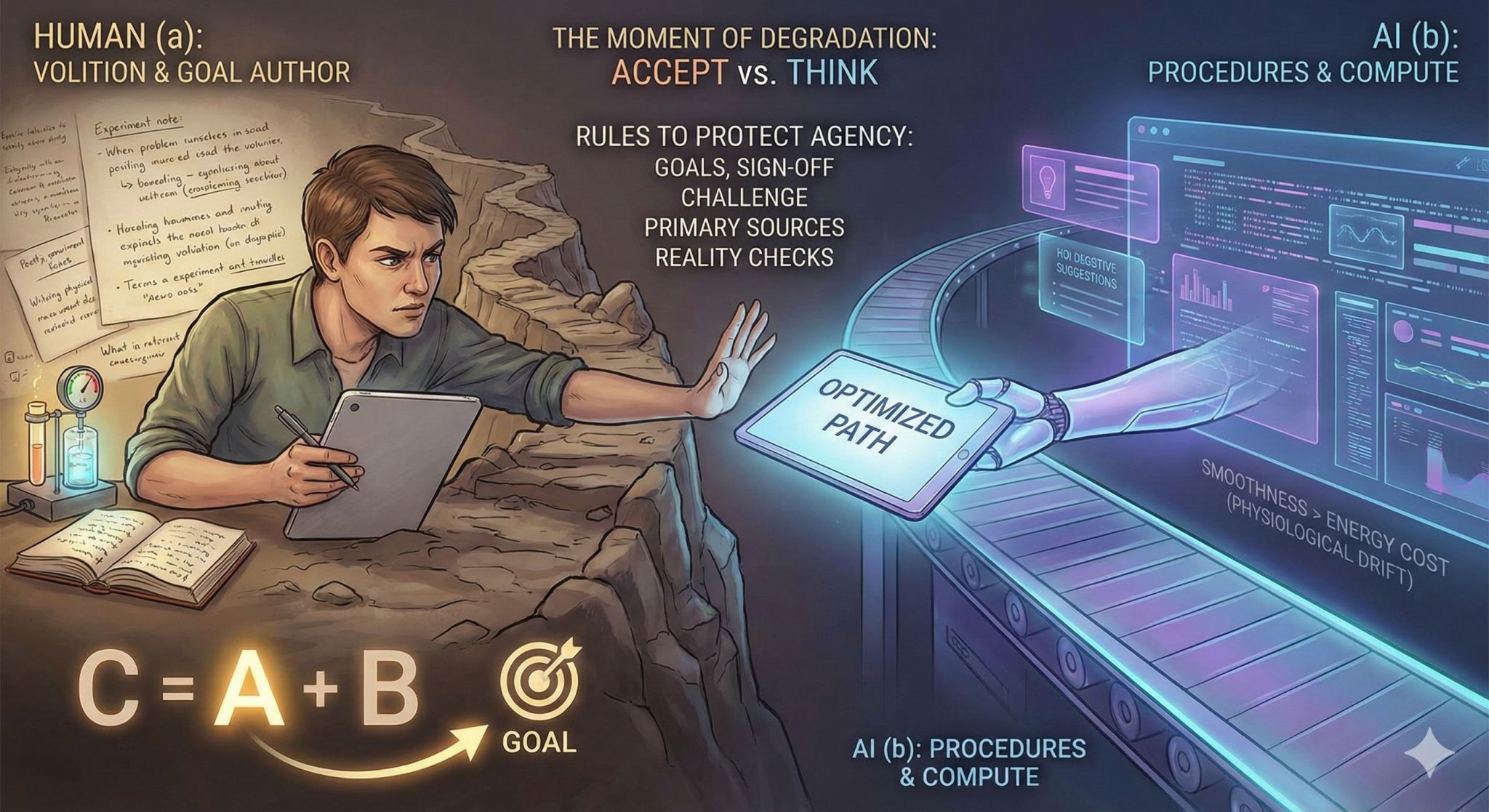

In my framing, c = a + b:

- a is the human (volition, accountability, body, the cost of mistakes),

- b is procedures and compute,

- c becomes real only if a does not surrender the role of goal author.

Practice (very concrete)

To avoid becoming "dumber than the system", I keep a few rules:

- I write the goals. The model can improve wording, not choose the goal.

- The model doesn't act. Any action requires my explicit sign-off / time window.

- A challenge window exists. Before I adopt the model's conclusions, I must contest at least one of them.

- Primary sources > summaries. If it matters, I read the original, not the remix.

- Small reality checks first. A minimal experiment, then scale.
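Read as a protocol, the sign-off and challenge-window rules can be sketched in a few lines. This is a minimal sketch, not the author's actual setup. It assumes that "sign-off / time window" means a sign-off only counts if it comes within a fixed window after the proposal is made. It also assumes that "contesting" means marking at least one of the model's stated conclusions as challenged. `Proposal` and `may_execute` are hypothetical names, not part of any real library.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Proposal:
    """An action suggested by the model. Nothing here executes by itself."""
    action: str
    conclusions: list[str]
    # Hypothetical: time the proposal was made (monotonic seconds).
    created_at: float = field(default_factory=time.monotonic)


def may_execute(
    proposal: Proposal,
    signed_off: bool,
    contested: set[str],
    window_seconds: float,
    now: Optional[float] = None,
) -> bool:
    """Allow the action only if all three hold:
    the human signed off, the sign-off falls inside the time window,
    and the human contested at least one of the model's conclusions."""
    now = time.monotonic() if now is None else now
    within_window = 0 <= now - proposal.created_at <= window_seconds
    challenged = any(c in contested for c in proposal.conclusions)
    return signed_off and within_window and challenged


# Usage: the model proposes, the human decides.
p = Proposal("send the report", ["numbers are final", "tone is fine"], created_at=0.0)
print(may_execute(p, signed_off=True, contested=set(), window_seconds=3600, now=60.0))
print(may_execute(p, signed_off=True, contested={"numbers are final"},
                  window_seconds=3600, now=60.0))
```

The first call returns `False` because nothing was challenged. The second returns `True`. The gate returns only a decision and never performs the action itself, which matches "the model doesn't act."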

Two quiet bridges (for the attentive):

- Cybernetics (Ashby's law of requisite variety): when the regulator (you) has less variety than what it is supposed to regulate, the system controls you - not the other way around.

- Bayesian hygiene: if you update your beliefs only from the model's text and not from observations, you drift into a polished illusion.

Grounded note: this is a physiological and engineering problem, not a moral one.

When you're sleep-deprived and overloaded, your brain picks the smoothest path, the one that costs the least energy. AI offers exactly that "smoothness", and you start handing over authority without noticing.

Question to end with:

What rules do you use to protect yourself from the moment when your system gets "smarter" - and you become merely more convenient?