We keep pushing for larger context windows.

100k tokens. 1M tokens. Soon, more.

But context length is not understanding.

And memory is not reality.

A model can hold a million tokens and still rot intellectually if those tokens are detached from the physical world.

This is why L4 matters.

L4 is not about rules.

Not about alignment.

Not about morality.

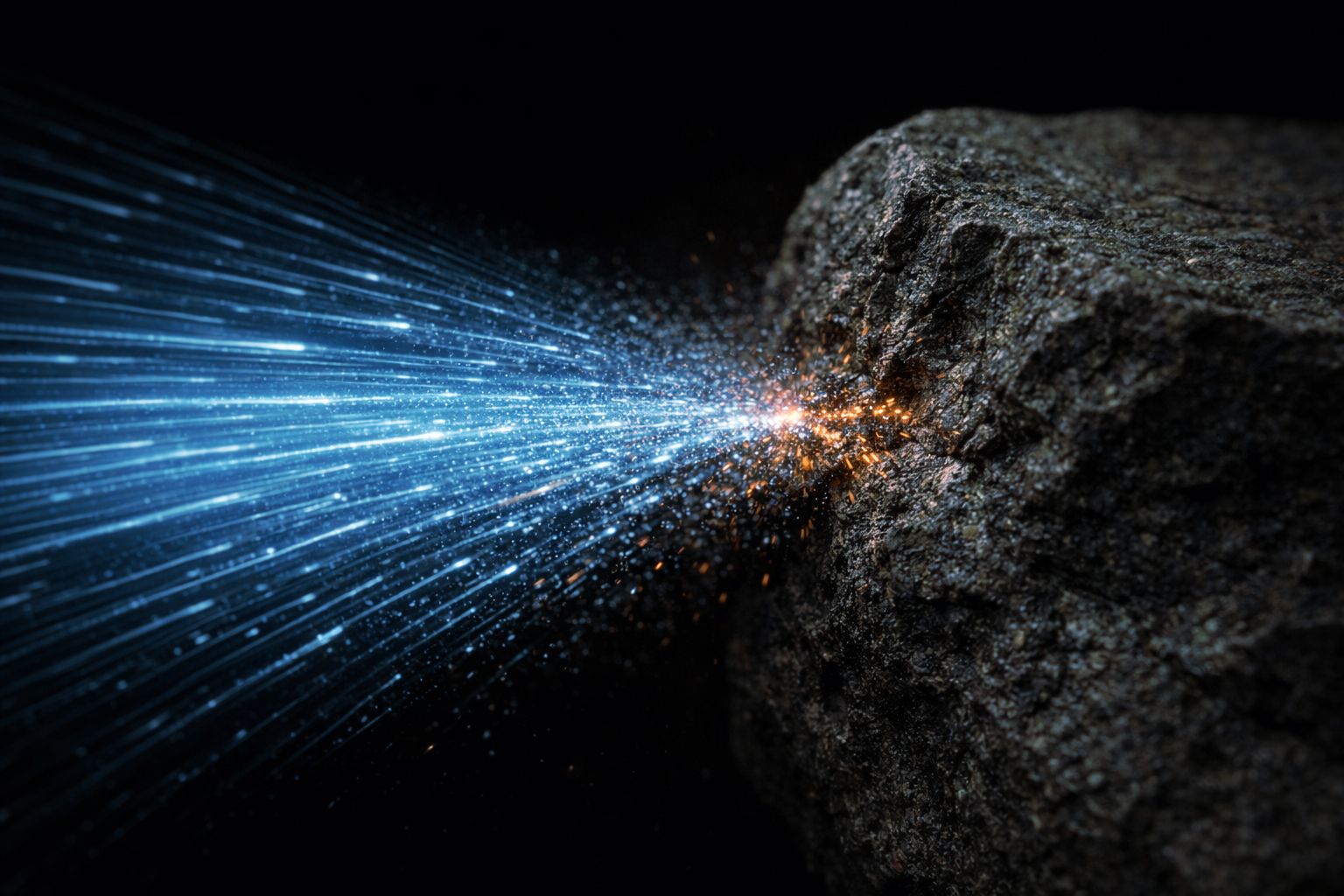

L4 is physics: cost, time, energy, irreversibility.

Reality introduces friction.

Friction introduces consequence.

Consequence introduces meaning.

Without L4, intelligence does not grow.

It smooths out.

It averages.

It collapses inward.

That is not superintelligence.

That is insulation.

More tokens won't save intelligence from itself.

Reality will.