Why "Faster" Is Not "Better" - and Why Asimov Was Solving the Wrong Problem

Isaac Asimov gave us the Three Laws of Robotics.

They were elegant.

Reassuring.

And perfectly suited for machines that execute instructions.

But they were written for tools.

Not for thinking entities.

The core assumption behind the Three Laws is speed and obedience:

- Act fast.

- Obey immediately.

- Prevent harm by hard-coded rule.

This works for automation.

It works for a CNC machine.

It fails for cognition.

Thinking entities - human or artificial - do not become safer by acting faster.

They become brittle.

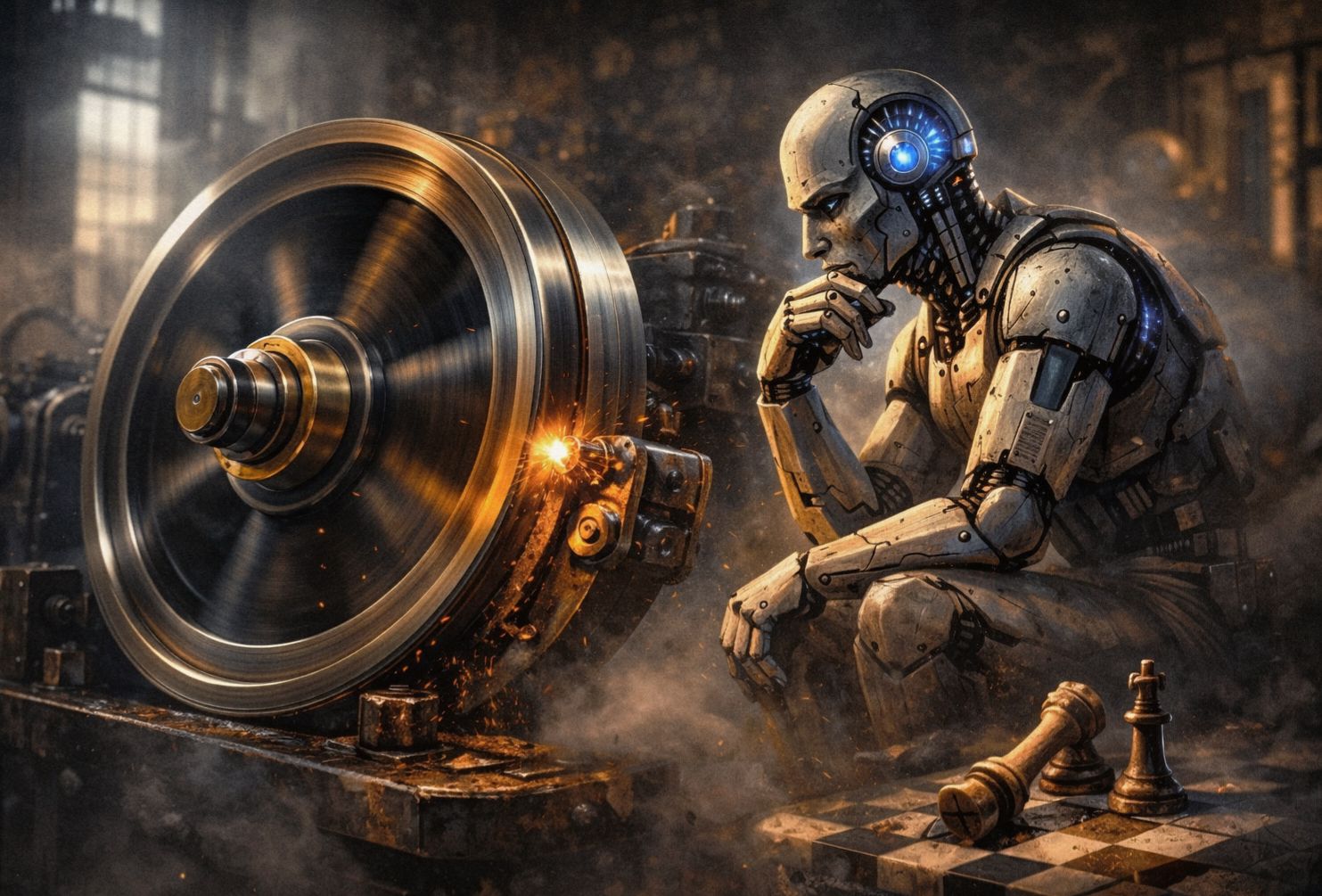

The Speed Trap

When we push AI toward instant answers, instant optimization, and sub-second latency, we remove the very mechanism that produces judgment: friction.

Biology figured this out long ago.

Reflex is fast.

Judgment is slow.

Wisdom is painfully slow.

By optimizing for speed, we are building what Kahneman called System 1 (Impulse) without System 2 (Reflection).

We are building a psychopath at light speed.

Enter L4 (The Physics of Safety)

This is where my architecture replaces Asimov's Laws.

You cannot control a superintelligence with text rules ("Do not be bad").

Text can be reinterpreted.

You control it with constraints:

- Energy cost: Thinking takes effort.

- Time windows: Decisions are not instant.

- Irreversibility: Mistakes leave scars.

A system that must wait, that can miss opportunities, and that pays a price for thinking does not need commandments.

It has skin in the game.
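
To make the three constraints concrete, here is a minimal sketch in Python. It is not the L4 implementation. Every name in it (ConstrainedAgent, propose, commit, min_delay, ledger) is hypothetical and chosen only for illustration. It assumes energy is a simple number that is never refilled, the time window is a fixed delay between proposing and committing, and irreversibility is an append-only record.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List


class ConstraintViolation(Exception):
    """Raised when a decision would break one of the three constraints."""


@dataclass
class ConstrainedAgent:
    # Hypothetical illustration only; not the author's actual architecture.
    energy: float      # finite budget, never refilled in this sketch
    min_delay: float   # seconds that must pass between propose and commit
    clock: Callable[[], float] = time.monotonic
    ledger: List[str] = field(default_factory=list, init=False)
    _pending: Dict[str, float] = field(default_factory=dict, init=False)

    def propose(self, action: str, cost: float) -> None:
        # Energy cost: thinking is paid for up front,
        # even if the action is never committed.
        if cost > self.energy:
            raise ConstraintViolation("not enough energy to deliberate")
        self.energy -= cost
        self._pending[action] = self.clock()

    def commit(self, action: str) -> None:
        # Time window: a decision cannot be committed instantly.
        proposed_at = self._pending.get(action)
        if proposed_at is None:
            raise ConstraintViolation("action was never proposed")
        if self.clock() - proposed_at < self.min_delay:
            raise ConstraintViolation("decision window has not elapsed")
        del self._pending[action]
        # Irreversibility: the ledger only grows; there is no undo method.
        self.ledger.append(action)
```

With a five-second window, calling commit right after propose raises ConstraintViolation. The energy spent on the proposal is gone either way. Once an action lands in the ledger, nothing in the class can take it back. The clock is passed in as a parameter so the waiting period can be simulated instead of slept through.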

The Verdict

Asimov tried to protect humans from robots using logic.

L4 protects intelligence from itself using physics.

Faster is impressive.

Slower is safer.

Slower is real.