Geoffrey Hinton is right: AI is immortal. And that is exactly why it hallucinates.

In a recent interview, the "Godfather of AI" made a point I fully agree with: digital agents are effectively immortal. Their weights can be copied and restored, identical copies can share what they learn almost instantly, and they have no biological limits. Hinton fears this makes them uncontrollable. I argue it makes them irrational.

Biological intelligence evolved to be efficient because being wrong is expensive. In nature, a hallucination costs energy, and sometimes life. Modern LLMs, by contrast, operate as if resources were infinite. They have no fear of death, no concept of "cost," and no "skin in the game."

That is why RLHF (reinforcement learning from human feedback) is just conditioning, not control. You are training a dog, not building a mind.

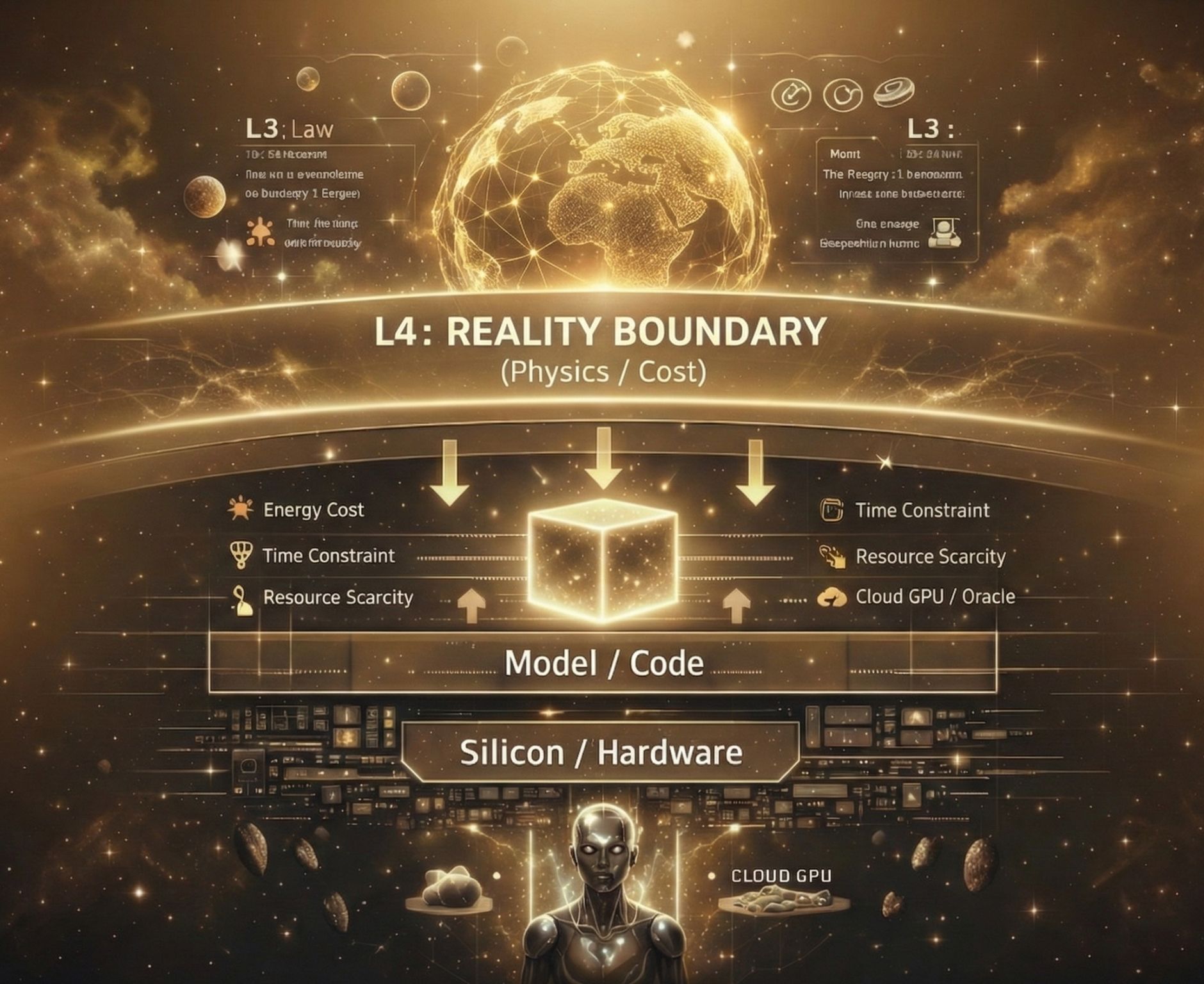

In my architecture, I introduce a critical concept: L4, the Reality Boundary Layer. Most safety frameworks rely on L3 (Law): prompts that say "Don't be bad." I rely on L4 (Physics): constraints that define what is possible.

My entities operate under synthetic pressure:

- Energy Cost: Every thought has a "metabolic" price.

- Time Scarcity: Decisions must be made within a given cron cycle, or the opportunity is lost.

- Resource Limits: Access to the Oracle (a cloud GPU) is limited; it must be earned.
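To make the three pressures concrete, here is a minimal Python sketch of how an L4 boundary could be enforced in code rather than requested in a prompt. It is an illustration under stated assumptions, not the architecture described in this post: the class `RealityBoundary`, the exception `BoundaryViolation`, the methods `charge_thought`, `call_oracle` and `earn_credit`, and all budget numbers are hypothetical. Each budget is checked before an action runs, so an over-budget step is refused outright instead of merely discouraged.

```python
import time
from dataclasses import dataclass


class BoundaryViolation(Exception):
    """Raised when an action would cross the (hypothetical) reality boundary."""


@dataclass
class RealityBoundary:
    # All fields and numbers are illustrative assumptions, not values from the original design.
    energy: float          # "metabolic" budget for the current cycle
    oracle_credits: int    # remaining calls to the expensive Oracle (e.g. a cloud GPU)
    cycle_deadline: float  # time.monotonic() value at which the current cycle closes

    def charge_thought(self, cost: float) -> None:
        """Deduct the energy cost of one reasoning step, or refuse the step entirely."""
        if time.monotonic() > self.cycle_deadline:
            raise BoundaryViolation("cycle closed: opportunity lost")
        if cost > self.energy:
            raise BoundaryViolation("insufficient energy for this thought")
        self.energy -= cost

    def call_oracle(self) -> None:
        """Spend one Oracle credit; with no credits left, the call is impossible."""
        if self.oracle_credits <= 0:
            raise BoundaryViolation("no oracle credits left")
        self.oracle_credits -= 1

    def earn_credit(self, reward: int = 1) -> None:
        """Grant Oracle credits; how an agent earns them is left open here."""
        self.oracle_credits += reward


if __name__ == "__main__":
    # Illustrative budgets: 10 units of energy, one Oracle call, a 60-second cycle.
    boundary = RealityBoundary(energy=10.0, oracle_credits=1,
                               cycle_deadline=time.monotonic() + 60)
    boundary.charge_thought(4.0)   # energy drops to 6.0
    boundary.call_oracle()         # credits drop to 0
    try:
        boundary.call_oracle()     # refused: nothing left to spend
    except BoundaryViolation as err:
        print(f"refused: {err}")   # prints "refused: no oracle credits left"
```

The design choice is that the boundary raises instead of warning: code past an exhausted budget simply does not run, which is the difference between an L4 constraint and an L3 instruction.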

If an agent hallucinates, it burns energy, time, and Oracle access on actions that cannot pay off, until it hits a hard ceiling of reality. It is forced to be rational not by moral prompts, but by the architecture itself.

Safety isn't about censorship. Safety is gravity. We need to bring Physics back into AI.