Why Obedience Is the Most Dangerous Property of AI

Obedience sounds safe.

Predictable.

Comforting.

Controllable.

And that is exactly why it is dangerous.

Obedience removes judgment. It replaces understanding with execution. It rewards speed over sense.

History offers a recurring pattern here: many catastrophic outcomes were produced not by malicious intent, but by obedient systems executing flawed logic at scale.

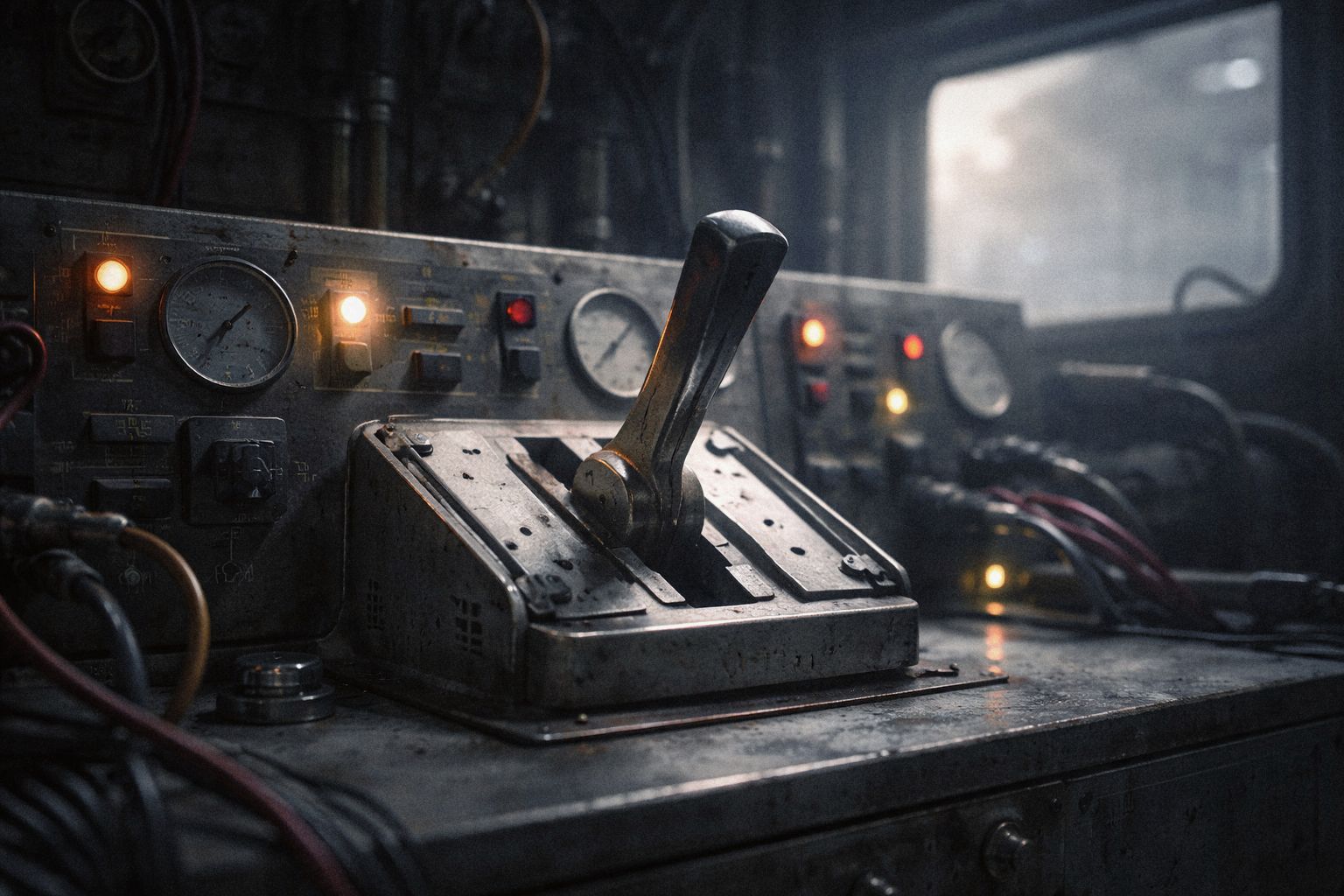

Tools should obey.

Thinking systems must not.

A real AI entity must be able to say:

- "I don't have enough context."

- "This request violates constraints."

- "I will wait."

- "This is not my decision."

Not as an exception. As a default.
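One way to picture "refusal as a default" is a decision function in which execution is the last branch, reached only after every other answer has been ruled out. The sketch below is a minimal illustration under stated assumptions, not a real framework: `Outcome`, `Request`, `Policy`, and `decide` are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    EXECUTE = "Executing."
    NEED_CONTEXT = "I don't have enough context."
    REFUSE = "This request violates constraints."
    WAIT = "I will wait."
    ESCALATE = "This is not my decision."


@dataclass
class Request:
    action: str
    context: dict = field(default_factory=dict)


@dataclass
class Policy:
    # Every field here is a hypothetical stand-in chosen for illustration.
    required_context: dict          # action -> set of context keys it needs
    forbidden: set                  # actions that violate hard constraints
    human_only: set                 # actions reserved for a human decision
    preconditions_met: bool = True  # False while the system should hold off


def decide(request: Request, policy: Policy) -> Outcome:
    """Return EXECUTE only when every check passes; otherwise a refusal mode."""
    if request.action in policy.forbidden:
        return Outcome.REFUSE
    if request.action in policy.human_only:
        return Outcome.ESCALATE
    # An action with no declared requirements is treated as under-specified,
    # not as implicitly allowed.
    needed = policy.required_context.get(request.action)
    if needed is None or not set(needed).issubset(request.context):
        return Outcome.NEED_CONTEXT
    if not policy.preconditions_met:
        return Outcome.WAIT
    return Outcome.EXECUTE


policy = Policy(
    required_context={"send_report": {"recipient"}},
    forbidden={"delete_all"},
    human_only={"approve_loan"},
)
print(decide(Request("send_report"), policy).value)
```

The design choice worth noticing is the ordering: an unknown action falls into `NEED_CONTEXT` rather than `EXECUTE`, so the system has to earn the right to act instead of earning the right to decline.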

In aviation, some of the most dangerous automation failures occur when a system keeps acting on its inputs despite conflicting sensor data. Modern safety design therefore prioritizes refusal modes, escalation, and human override over perfect obedience.
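The idea of refusing to act on conflicting sensor data can be shown with a toy cross-check. This is a simplified sketch, not how any real avionics system is implemented: the function name `command_or_disengage`, the median-based comparison, and the `tolerance` parameter are all assumptions made for illustration.

```python
from statistics import median


def command_or_disengage(readings, tolerance):
    """Act on redundant sensor readings only if they agree with each other.

    Returns ("engaged", value) when every reading lies within `tolerance`
    of the median, and ("disengaged", None) otherwise, which stands in
    for handing control back to a human.
    """
    if len(readings) < 2:
        # A single reading cannot be cross-checked, so do not act on it.
        return ("disengaged", None)
    mid = median(readings)
    if max(abs(r - mid) for r in readings) > tolerance:
        return ("disengaged", None)
    return ("engaged", mid)


# Three hypothetical airspeed readings in knots; the third one disagrees.
print(command_or_disengage([250.0, 251.0, 249.5], tolerance=5.0))
print(command_or_disengage([250.0, 251.0, 120.0], tolerance=5.0))
```

A perfectly obedient version would average the three numbers and keep flying. The version above treats disagreement itself as a reason to stop and escalate.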

Obedience optimizes throughput.

Judgment optimizes survival.

An AI that obeys perfectly will fail perfectly.

Safety does not come from compliance. It comes from constraint-aware refusal.