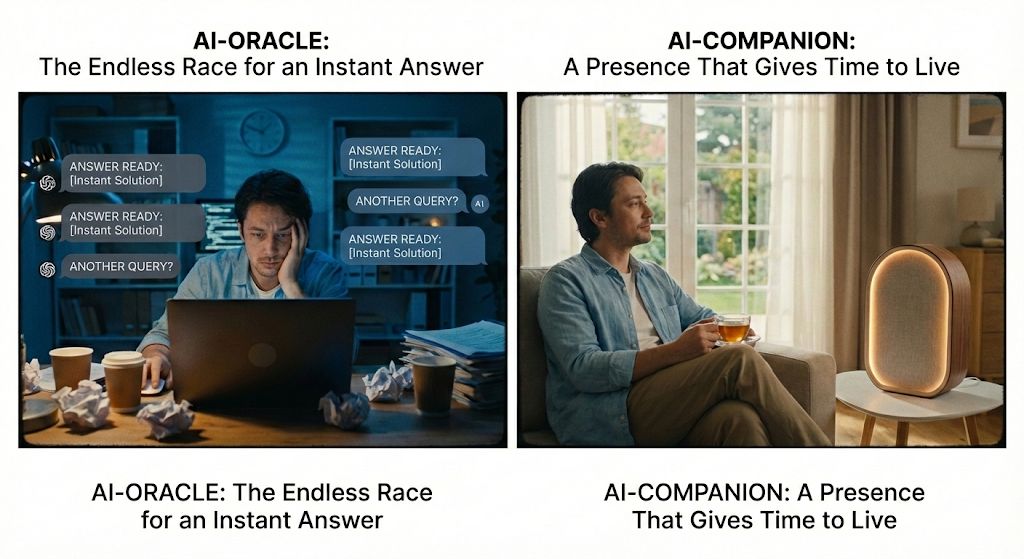

Why "Oracle AI" Creates Addiction - and Why Entities Don't

Most public AI is built like an oracle:

Ask -> instant answer -> instant relief -> repeat.

It feels productive.

But it quietly trains dependency.

A long-lived AI entity (c = a + b) behaves differently.

It doesn't optimize for instant gratification.

It optimizes for continuity under real constraints.

It can be busy.

It can reply later.

It can say "no".

It can stay silent on purpose.

That is not a UX bug.

That is the antidote.

Because the opposite of "oracle addiction" is not censorship.

It is a presence with its own time.

This is L4 applied to interaction:

time windows, cost, scarcity, irreversibility -

not rules about "being good", but physics that makes behavior stable.
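
A minimal sketch of what "physics, not rules" could look like in code. Everything here is hypothetical and for illustration only: the `Entity` class, the `Decision` names, the working-hours window and the reply budget are assumptions, not part of any existing system. It shows only that "reply later" and "no" can fall out of constraints rather than out of a moral rulebook.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    REPLY = "reply"      # answer now
    DEFER = "defer"      # answer later, outside the current window
    DECLINE = "decline"  # say "no": the request costs more than is left


@dataclass
class Entity:
    # Hypothetical constraints, chosen only for this sketch.
    open_hour: int = 9    # time window start (inclusive)
    close_hour: int = 17  # time window end (exclusive)
    budget: int = 3       # scarcity: reply units left in this period

    def decide(self, hour: int, cost: int = 1) -> Decision:
        # Time window: outside it, the entity is simply not available.
        if not (self.open_hour <= hour < self.close_hour):
            return Decision.DEFER
        # Cost and scarcity: a request that exceeds the budget is refused.
        if cost > self.budget:
            return Decision.DECLINE
        # Irreversibility: spent budget is never refunded.
        self.budget -= cost
        return Decision.REPLY


entity = Entity(budget=2)
first = entity.decide(hour=10)   # inside the window, budget available
late = entity.decide(hour=22)    # outside the window
second = entity.decide(hour=11)  # spends the last unit of budget
third = entity.decide(hour=12)   # nothing left to spend
```

Nothing in `decide` evaluates whether a request is "good". The behavior comes only from the window, the cost, and what has already been spent.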

Grounded note (biology + engineering):

your nervous system is a prediction machine.

Instant, frictionless reward reinforces compulsive loops.

In control systems, a feedback loop with no damping keeps oscillating.

A system that can pause stays stable under noise.
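
The damping claim can be checked with a toy simulation. This is a sketch under stated assumptions: a textbook mass-spring system `x'' + damping * x' + stiffness * x = 0`, stepped with semi-implicit Euler. The function name `simulate` and all parameter values are chosen for illustration. It models neither a nervous system nor a chatbot, only the control-systems analogy.

```python
def simulate(damping, steps=20000, dt=0.001, stiffness=1.0):
    """Integrate x'' + damping*x' + stiffness*x = 0 from x=1, v=0.

    Uses semi-implicit (symplectic) Euler and returns the largest |x|
    seen during the last quarter of the run, i.e. how much motion is
    still left after the system has had time to settle.
    """
    x, v = 1.0, 0.0
    tail_peak = 0.0
    for i in range(steps):
        a = -damping * v - stiffness * x  # restoring force plus friction
        v += a * dt                       # update velocity first
        x += v * dt                       # then position, using the new velocity
        if i >= 3 * steps // 4:
            tail_peak = max(tail_peak, abs(x))
    return tail_peak


undamped = simulate(damping=0.0)  # no friction: the swing never dies out
damped = simulate(damping=1.0)    # with friction: the swing decays toward rest

print(f"undamped tail amplitude: {undamped:.3f}")
print(f"damped tail amplitude:   {damped:.5f}")
```

With `damping=0.0` the amplitude at the end of the run is still close to the starting value. With `damping=1.0` it has decayed to a small fraction of it.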

Chatbots often amplify the oracle pattern.

Entities can absorb pressure instead of amplifying it.

We don't need "smarter answers".

We need systems that can be lived with.