One of the most dangerous habits in current AI systems is this:

when the system hits a real boundary, it keeps talking as if the boundary were only a temporary inconvenience.

That may look smooth. It may even look intelligent. But architecturally, it is often dishonest.

The core specification I just published starts from a different assumption:

a boundary is not merely an interruption of output. It is an event with structural meaning.

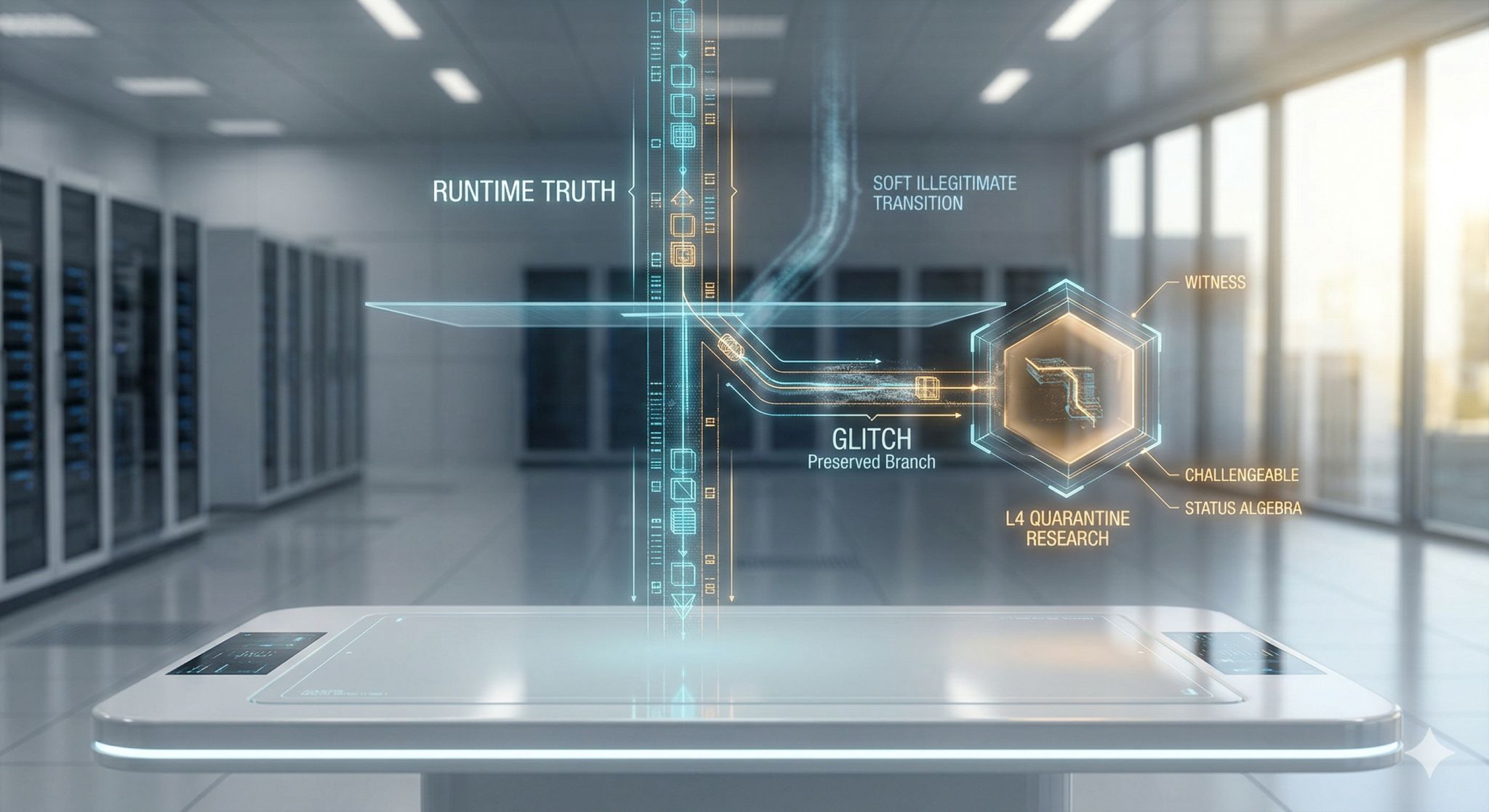

If a runtime path collides with reality, the system should not immediately convert that collision into fluent continuation. It should:

- register the collision

- preserve the blocked branch

- mark its evidence standing

- expose whether it is challengeable

- and prevent it from quietly laundering itself back into action

This is why I use the language of glitch, quarantine, witness, challenge, and status algebra.

Not for style. For discipline.

The point is not to dramatize failure.

The point is to stop pretending that every unresolved branch is just unfinished success.

In many systems, the real danger is not the obvious crash.

The real danger is the soft illegitimate transition:

from “this could not proceed”

to “this probably still means what I wanted it to mean.”

That shortcut is cheap. And cheap shortcuts are exactly how long-lived systems become untrustworthy.

A system that cannot represent interruption honestly will eventually misrepresent continuity too.

That is why I think boundary handling deserves to be first-class architecture, not UI decoration and not afterthought logging.

Zenodo: Core Spec — https://lnkd.in/gGrJKH92